Fake Door Testing - how to prioritize your sprint backlog based on evidence

What the H are Fake Doors and why are they the most powerful tool product teams have access to?

A Product Manager has to manage feature request intake from their stakeholders constantly.

Sales thinks a feature is needed to close a deal.

Marketing thinks a feature is needed based on their market research.

Customer Support thinks they can reduce churn if only the product has a feature.

The Engineers and User Researchers also have opinions on what to build next.

The Customers are asking for everything.

The Product Manager has the hard job of saying no to these other lovely humans. Most of the time, it is opinion against opinion, and the loudest voice, the highest authority, or the highest-paying customer wins.

Intake and Prioritization

Feature intake and prioritization is the most crucial responsibility of the PM, because building the wrong features could cost us our jobs and reputations.

But no matter how much we apply best practices and frameworks for feature prioritization, we run a high risk of building things that the right people will not use.

We can test whether the intended audience will use a feature by running a Fake Door Experiment, which keeps the wrong things from ending up on the developers' backlog.

What is a Fake Door Experiment?

Instead of building the idea, or even investing in more complex research, we build only the minimum necessary interface (for example, a button or a menu item) and place it in front of users in the natural product environment to see whether they interact with it.

The interaction data gives us evidence of whether users are really interested in the feature before anything is built.
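To make the mechanics concrete, here is a minimal sketch of what sits behind a fake door: an entry point that records who saw it and who clicked it, with nothing built behind it. Everything named here (`createFakeDoor`, `FakeDoorEvent`, the `export-to-pdf` experiment, and the message shown after a click) is a hypothetical illustration, not the API of any particular tool.

```typescript
// Hypothetical event shape; a real product would forward this to its analytics pipeline.
type FakeDoorEvent = {
  experimentId: string;
  userId: string;
  kind: "impression" | "click";
  timestamp: number;
};

// Hypothetical sink for events (an in-memory array in the usage example below).
type Tracker = (event: FakeDoorEvent) => void;

function createFakeDoor(experimentId: string, track: Tracker) {
  const emit = (userId: string, kind: FakeDoorEvent["kind"]): void => {
    track({ experimentId, userId, kind, timestamp: Date.now() });
  };
  return {
    // Call when the entry point (button, menu item, banner) is shown to a user.
    recordImpression(userId: string): void {
      emit(userId, "impression");
    },
    // Call when the user clicks the entry point. Nothing is built behind it,
    // so record the interest and return a message the UI can display.
    recordClick(userId: string): string {
      emit(userId, "click");
      return "This feature is not available yet. Thanks for showing interest!";
    },
  };
}

// Usage: two users see the door, one of them clicks it.
const events: FakeDoorEvent[] = [];
const exportDoor = createFakeDoor("export-to-pdf", (event) => {
  events.push(event);
});
exportDoor.recordImpression("user-1");
exportDoor.recordImpression("user-2");
const message = exportDoor.recordClick("user-2");
console.log(message, events.length);
```

Recording impressions as well as clicks matters: without knowing how many users saw the door, the click count cannot be turned into a rate.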

Planning a Fake Door Experiment

Validating an idea or two every sprint works well when running Discovery and Delivery in parallel. While the developers are working on the current features, we are validating the next body of work alongside them.

Grooming Sessions

Backlog grooming sessions happen separately from planning sessions so that the team can discuss priorities effectively. They involve the engineering manager, product manager, user researcher, and tech lead.

In a backlog grooming session, we do two things:

Look at data from previous idea validation tests to determine what to build next.

Look at data from the prioritization frameworks to determine what ideas to validate next.

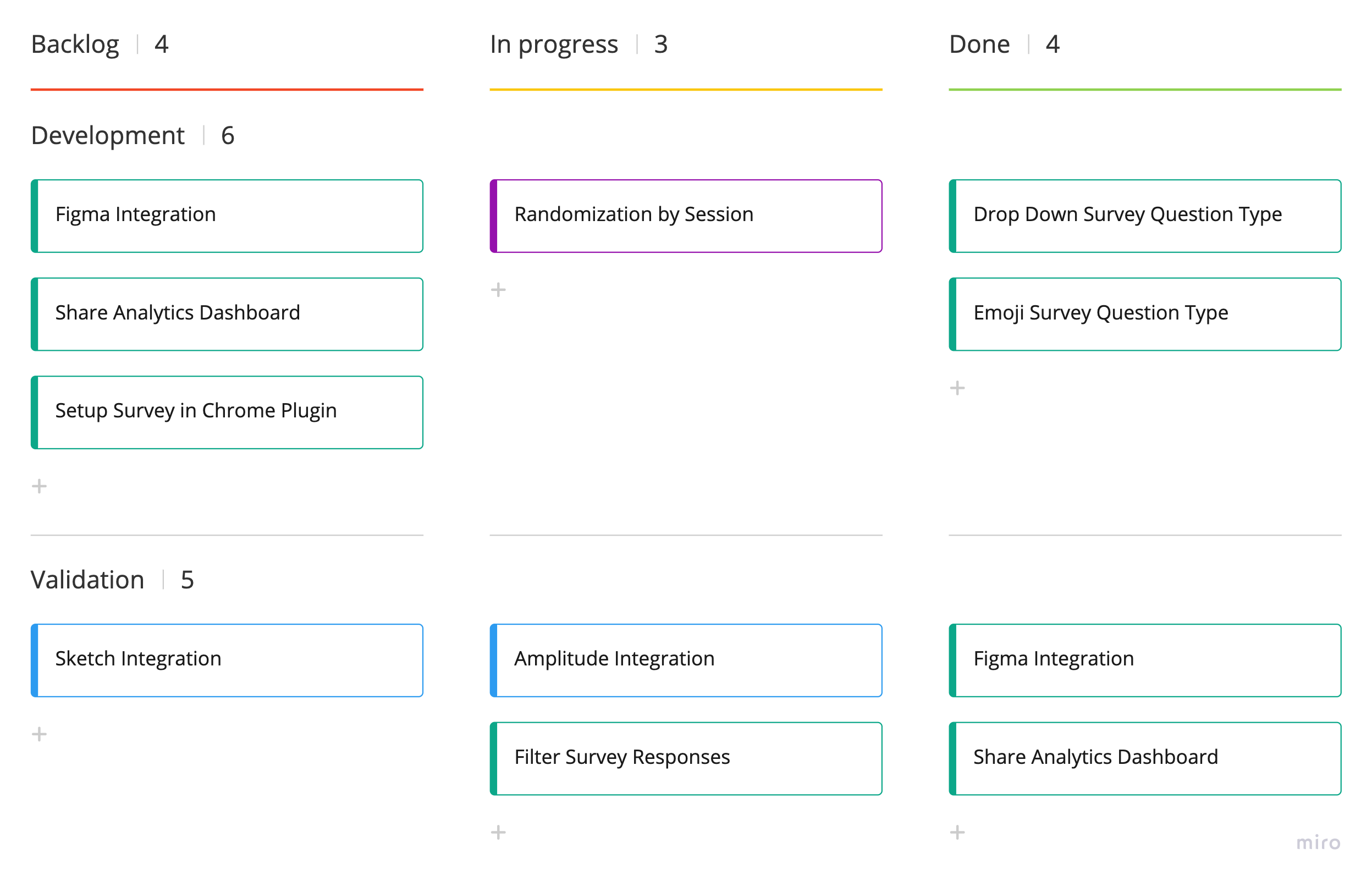
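When we look at data from previous validation tests, a raw click rate can mislead: 4 clicks from 20 viewers looks better than 120 clicks from 1,000, but rests on far less evidence. One way to compare ideas fairly, sketched below, is to rank them by the lower bound of a Wilson score interval on the click rate. The `DoorResult` shape, the idea names, and the numbers are made up for illustration, and the ranking rule is an assumption on our part rather than a prescribed method.

```typescript
// Hypothetical summary of one fake door test: how many distinct users saw
// the entry point and how many of them clicked it.
type DoorResult = { idea: string; viewers: number; clickers: number };

type RankedResult = DoorResult & { rate: number; lower: number; upper: number };

// Wilson score interval for a proportion; z = 1.96 gives roughly 95% confidence.
function wilsonInterval(successes: number, trials: number, z = 1.96): [number, number] {
  if (trials <= 0) return [0, 1]; // no data: the rate could be anything
  const p = successes / trials;
  const z2 = z * z;
  const denom = 1 + z2 / trials;
  const center = (p + z2 / (2 * trials)) / denom;
  const margin =
    (z * Math.sqrt((p * (1 - p)) / trials + z2 / (4 * trials * trials))) / denom;
  return [Math.max(0, center - margin), Math.min(1, center + margin)];
}

// Sort ideas by the lower bound of the interval, highest first, so an idea with
// a high rate from a tiny sample does not outrank a well-supported one.
function rankByEvidence(results: DoorResult[]): RankedResult[] {
  return results
    .map((r) => {
      const [lower, upper] = wilsonInterval(r.clickers, r.viewers);
      const rate = r.viewers > 0 ? r.clickers / r.viewers : 0;
      return { ...r, rate, lower, upper };
    })
    .sort((a, b) => b.lower - a.lower);
}

// Usage with made-up numbers.
const ranked = rankByEvidence([
  { idea: "bulk-invite", viewers: 20, clickers: 4 }, // 20% of a small sample
  { idea: "export-to-pdf", viewers: 1000, clickers: 120 }, // 12% of a large sample
]);
for (const r of ranked) {
  console.log(r.idea, r.rate.toFixed(3), r.lower.toFixed(3), r.upper.toFixed(3));
}
```

With these numbers, export-to-pdf ranks first even though its raw rate is lower, because its interval is much narrower. A team might instead decide to keep the bulk-invite test running until it has more viewers.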

Teams using a kanban board with Next, Doing, and Done columns could take a swim lane approach:

We add a swim lane for idea validation, containing a list of ideas we need to start testing alongside feature development.

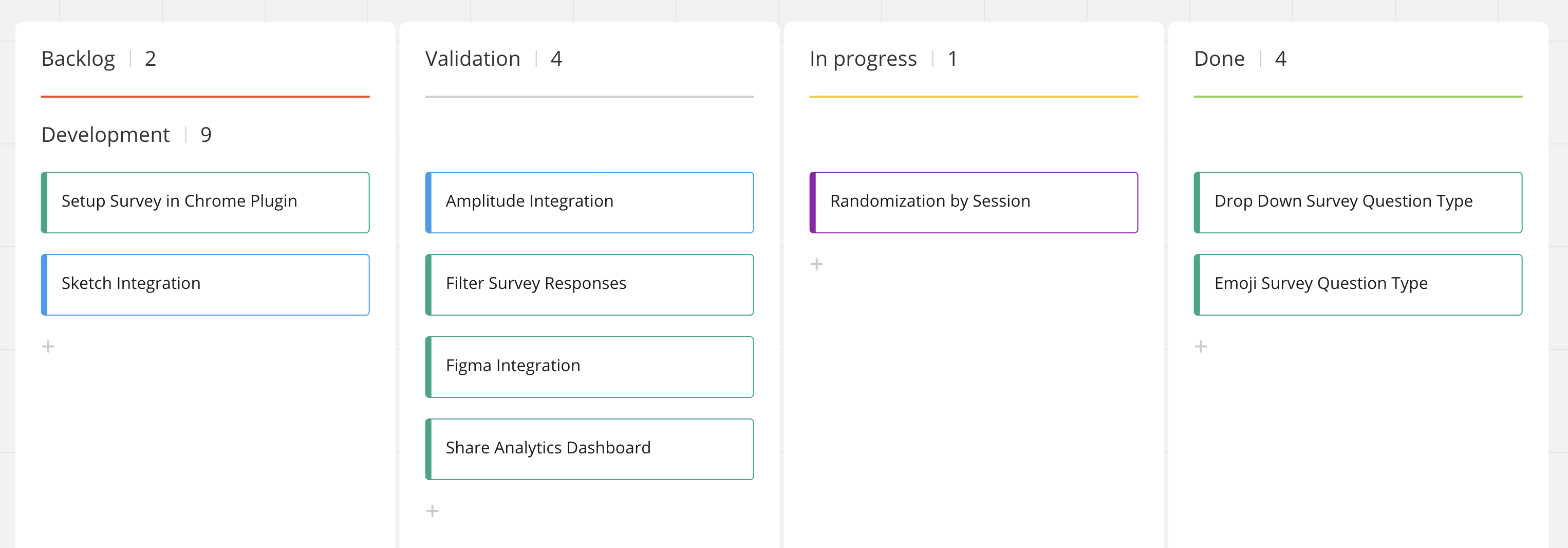

Another option is to add a new column that represents validation; items that look like they can be validated with a fake door test go into that column.

What is the best approach?

Adding a swim lane is the best method for teams who discover and deliver solutions in parallel. That way validation does not become a blocker.

Planning Sessions

Sprint planning sessions are usually fun. When engineers, UX and PM get together to discuss the next body of work, there can be heated discussions on why and how.

By adding idea validation to our planning sessions, we get the team involved in answering the why for the following sprint.

The team determines what a fake door test should look like and where it is positioned. This is called the design of the experiment. Depending on the experimentation infrastructure, this test can be designed and launched within 5 minutes with a dedicated fake door testing tool such as Samelogic.
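One way to keep the design of the experiment explicit is to write it down as data during the planning session and check it before launch. The sketch below is only an illustration: `FakeDoorDesign`, its field names, and the checks in `designProblems` are assumptions of ours, not the schema of Samelogic or any other tool.

```typescript
// Hypothetical design record; field names are illustrative, not a tool's schema.
type FakeDoorDesign = {
  idea: string;
  label: string; // what the entry point says to the user
  placement: { page: string; nearElement: string }; // where it is positioned
  audience: string; // who should see it
  minViewers: number; // sample size to reach before reading results
  targetClickRate: number; // fraction between 0 and 1 that counts as interest
};

// Return a list of problems; an empty list means the design is ready to launch.
function designProblems(design: FakeDoorDesign): string[] {
  const problems: string[] = [];
  if (design.label.trim() === "") problems.push("label is empty");
  if (design.placement.page.trim() === "") problems.push("placement page is missing");
  if (!Number.isInteger(design.minViewers) || design.minViewers <= 0) {
    problems.push("minViewers must be a positive integer");
  }
  if (!(design.targetClickRate > 0 && design.targetClickRate <= 1)) {
    problems.push("targetClickRate must be greater than 0 and at most 1");
  }
  return problems;
}

// Usage with made-up values agreed on in a planning session.
const exportDesign: FakeDoorDesign = {
  idea: "export-to-pdf",
  label: "Export as PDF",
  placement: { page: "/reports", nearElement: "share button" },
  audience: "paying customers",
  minViewers: 500,
  targetClickRate: 0.1,
};
console.log(designProblems(exportDesign));
```

Agreeing on `minViewers` and `targetClickRate` before launch keeps the team from moving the goalposts once results start coming in.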

Retrospectives

In retrospectives, along with discussing the team's health and what the team could have done better, we look at the fake door experiments we are running to see how we could tweak them to get less biased and more accurate results.

The goal of the retrospective is "how can we learn faster?"

Conclusion

By embedding fake door tests in the product culture, we can conduct scientific experimentation without the overhead of expensive tools and frameworks such as A/B testing or complex user research methods.

The fake door testing method democratizes the feature prioritization process so everyone can get the evidence to support their opinion.

The cognitive pressure and risk of prioritization then rest not solely on the product manager but on the whole team and the stakeholders involved. We can show evidence of why we say yes, no, or later to a feature request.
