The Passing Era of A/B Testing: An Analysis on its Decline | Samelogic Blog

For years, A/B testing has been a cornerstone of the digital world, a tool designed to analyze and optimize user experience. With the constant evolution of technology, the once-thriving A/B testing landscape has experienced a significant shift, and the sunsetting of Google Optimize is a visible sign of it. It is vital to explore the reasons behind the perceived decline of A/B testing and understand its implications for data-driven decision making.

The Age of Big Data and AI: A More Complex Landscape

The rise of big data and artificial intelligence (AI) has dramatically transformed how we analyze user behavior. In a world where a multitude of factors contribute to user decisions, A/B testing, a somewhat binary method, can no longer provide comprehensive insights.

A/B testing, which operates on the premise of changing one variable while holding others constant, falls short in big data environments. In contrast, machine learning algorithms are capable of detecting complex patterns and relationships between multiple variables simultaneously, yielding more accurate and actionable insights.

Moreover, AI-powered personalization has made every user's journey unique, making it hard to generalize results from A/B testing. Algorithms learn and adapt in real time to each user, which makes it very difficult to maintain the "all other things being equal" condition that A/B testing depends on.

The Limitations of A/B Testing: The Quest for Statistical Significance

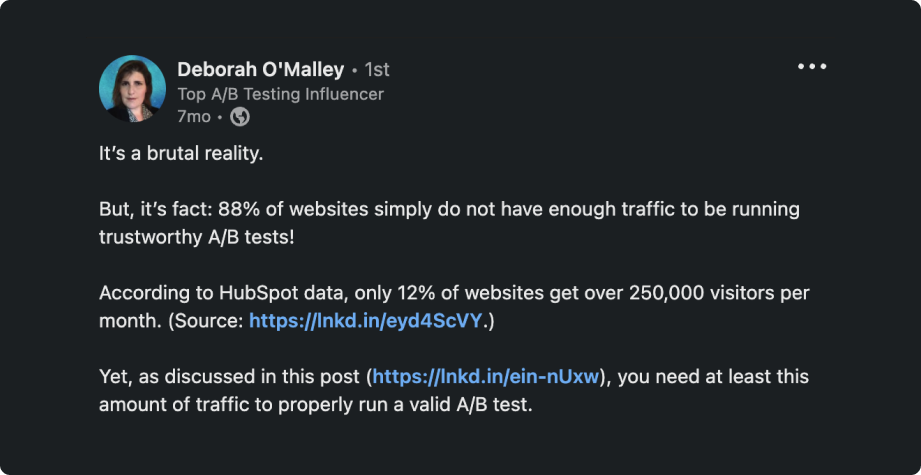

One of the glaring issues with A/B testing is the sample size required to achieve statistical significance. One estimate puts the sample needed for a reliable A/B test at around 250,000 users, although the exact figure depends on the baseline conversion rate and the size of the effect you want to detect. A sample that large presents a significant barrier for many businesses, especially small and medium-sized companies.

For startups and less trafficked sites, it might take weeks, or even months, to reach the required number of visitors for a single test. This slows down the decision-making process and the speed at which companies can innovate and improve their platforms.
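The sample-size and waiting-time problem described above can be estimated with a rough calculation. The sketch below uses the standard normal-approximation formula for comparing two conversion rates. The function names and the example numbers (a 3% baseline, a 10% relative lift, 1,500 visitors per day) are illustrative assumptions, not figures from this article.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.8):
    """Approximate users needed in EACH variant (two-sided two-proportion z-test)."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for the significance level
    z_beta = NormalDist().inv_cdf(power)           # critical value for the desired power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def days_to_finish(n_per_variant, daily_visitors, variants=2):
    """Days needed if every visitor is enrolled and split evenly across variants."""
    return math.ceil(n_per_variant * variants / daily_visitors)


if __name__ == "__main__":
    # Hypothetical site: 3% baseline conversion, hoping to detect a 10% relative lift.
    n = sample_size_per_variant(baseline_rate=0.03, relative_lift=0.10)
    print(f"Users per variant: {n:,}")
    print(f"Days at 1,500 visitors/day: {days_to_finish(n, daily_visitors=1500)}")
```

Because the required sample grows with the inverse square of the effect size, halving the lift you want to detect roughly quadruples the number of users needed. That is why low-traffic sites can wait weeks or months for a single test.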

Furthermore, even if you have a large user base, driving a substantial portion of it into the test can be challenging. It requires a meticulously planned and executed marketing strategy, which not every business is able or willing to invest in.

In addition, achieving statistical significance doesn't automatically translate to practical significance. For instance, a slight improvement in click-through rate might be statistically significant with a large enough sample size, but it may not have a meaningful impact on overall business outcomes.
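To make the gap between statistical and practical significance concrete, here is a minimal sketch of a pooled two-proportion z-test. The traffic numbers and the `MIN_MEANINGFUL_LIFT` threshold are hypothetical, chosen only to show that a very small lift can pass a significance test when the sample is large enough.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (absolute lift, z statistic, two-sided p-value) for B versus A."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return p_b - p_a, z, p_value


# Hypothetical business rule: lifts under 0.2 percentage points are not worth shipping.
MIN_MEANINGFUL_LIFT = 0.002

if __name__ == "__main__":
    # Hypothetical test: 2,000,000 users per variant, 3.00% vs 3.05% click-through.
    lift, z, p_value = two_proportion_z_test(60_000, 2_000_000, 61_000, 2_000_000)
    print(f"lift={lift:.4%}  z={z:.2f}  p={p_value:.4f}")
    print("statistically significant:", p_value < 0.05)
    print("practically significant:", lift >= MIN_MEANINGFUL_LIFT)
```

In this made-up case the test clears the usual 0.05 threshold, yet the lift is only 0.05 percentage points, well below the assumed minimum worth acting on.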

The need for a massive sample size, the time and effort required to run the test, and the possibility of results that are statistically or practically insignificant make A/B testing a less appealing strategy for many companies. Instead, businesses are turning to alternative providers (such as Samelogic.com) and methods that can deliver more timely and actionable insights for decision making.

Real-time Decision Making and A/B Testing

In our fast-paced digital environment, real-time decision making is crucial. Unfortunately, A/B testing is not designed for real-time insights. It requires time for data collection and analysis. By the time a business can act on A/B test results, user behavior may already have shifted, leaving the insights outdated.

Moreover, an inherent flaw in A/B testing is its assumption that the winning variant will remain superior. The digital landscape is dynamic, and today's winning design might not be effective tomorrow. With real-time decision making becoming essential, A/B testing's limitations are more evident.

The Fallacy of Quantitative Focus

A/B testing is inherently quantitative. It measures clicks, conversion rates, and other numerical data. However, this focus on quantitative data omits the qualitative aspects of user experience.

User experience is more than just numbers. It involves understanding why users behave the way they do. A/B testing tells you what users are doing, but it often falls short in explaining why they are doing it.

As user experience becomes increasingly important in the digital world, understanding user motivations, preferences, and pain points is crucial. Therefore, methods that combine both quantitative and qualitative data are gaining popularity over A/B testing.

The Future of A/B Testing

The shift away from A/B testing reflects the changing digital landscape. As the world moves toward big data and AI, three things become increasingly important: real-time decision making, a combined approach of quantitative and qualitative analysis, and ethical considerations.

While A/B testing has its merits, its limitations have become more pronounced in today's digital environment. Businesses and digital marketers must evolve their data analysis methods to keep up with these changes. Understanding why A/B testing is experiencing a decline is the first step in this evolution.

This is not to say A/B testing is entirely obsolete. Rather, its dominance as a standalone optimization tool is receding, making way for more advanced approaches that combine various data sources and use AI-driven analysis. These methods take into account the dynamic nature of user behavior and provide more nuanced insights, tailored to the complexities of today's digital landscape.